Introduction

AI writing tools like ChatGPT, Claude, and Gemini have changed the way students approach assignments. Schools and universities are under pressure to maintain academic integrity, and Turnitin has become their go-to solution. But just how accurate is Turnitin's AI detection? This article separates the myths from the research-backed facts and explains what the latest data actually shows, so that students and teachers can make informed, fair judgments.

What Is Turnitin’s AI Detection and How Does It Work?

Turnitin introduced its AI writing detector in April 2023. By June 2024, it had already seen more than 250 million paper submissions across more than 16,000 institutions in 185 countries.

The key point is that Turnitin does not match your text against a database of AI-generated writing. Instead, it examines statistical patterns in how you write.

The Two Key Signals It Looks For

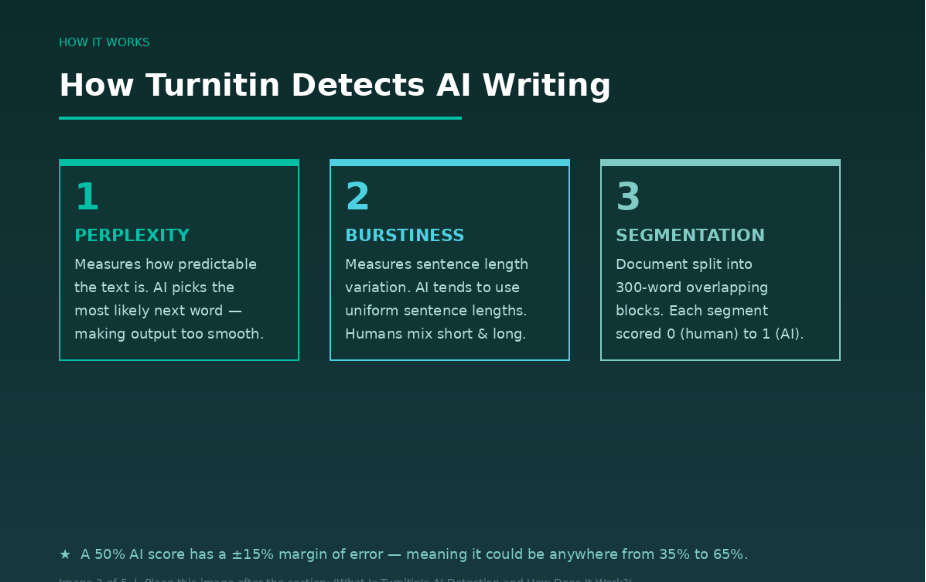

Perplexity and burstiness are the two main signals of AI writing. Perplexity measures how predictable your writing is: AI models are trained to select the most statistically likely next word, so their output tends to be smooth but predictable. Burstiness describes rhythm and flow: human text naturally mixes short, punchy sentences with longer, more descriptive ones, whereas AI models tend to generate sentences of similar length.
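To make the two signals concrete, here is a minimal sketch of how they could be measured. It is not Turnitin's method: burstiness is approximated as the spread of sentence lengths, and perplexity is computed with a toy word-frequency (unigram) model instead of a real language model. The function names and sample texts are hypothetical.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Spread of sentence lengths (standard deviation divided by mean)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Toy stand-in for a real language model: lower values mean more predictable text.
    """
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    ref_counts = Counter(reference.lower().split())
    vocab_size = len(ref_counts) + 1  # one extra slot shared by all unseen words
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[w] + 1) / (total + vocab_size)) for w in words
    )
    return math.exp(-log_prob / len(words))


even = "One two three. Four five six. Seven eight nine."
varied = "Stop. The long sentence keeps going for quite a while here. Yes."
print("burstiness (even):  ", burstiness(even))
print("burstiness (varied):", burstiness(varied))
```

Text with evenly sized sentences scores zero on this burstiness measure, while text that mixes very short and long sentences scores higher. Likewise, words the reference model has seen often yield a lower perplexity than words it has never seen.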

Turnitin divides your paper into 300-word segments and analyzes each one separately with a language model trained to distinguish human writing patterns from AI ones. If it flags 3 out of 10 segments, the paper receives a 30% AI score.
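The arithmetic behind that score can be sketched in a few lines. This is only an illustration of the segment-and-count idea described above. The per-segment flags are supplied by hand because the actual classifier is not public, and the function names are hypothetical.

```python
def split_into_segments(text: str, words_per_segment: int = 300) -> list[str]:
    """Cut a paper into consecutive chunks of `words_per_segment` words."""
    words = text.split()
    return [
        " ".join(words[i:i + words_per_segment])
        for i in range(0, len(words), words_per_segment)
    ]


def ai_score(segment_flags: list[bool]) -> float:
    """Percentage of segments that were flagged as AI-written."""
    if not segment_flags:
        return 0.0
    return 100 * sum(segment_flags) / len(segment_flags)


paper = "word " * 3000                  # placeholder 3,000-word paper
segments = split_into_segments(paper)   # 10 segments of 300 words each
flags = [True, True, True] + [False] * 7  # assume the model flagged 3 segments
print(len(segments), "segments, AI score:", ai_score(flags))
```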

What Turnitin Claims vs. What Research Shows

Turnitin reports 98 percent accuracy in detecting AI-written content, with a false positive rate of less than 1 percent for documents containing at least 20 percent AI content.

But the real-world picture is more complex.

Turnitin itself acknowledges an important caveat: its own product officer has said that the detector deliberately catches approximately 85 percent of AI-generated content and lets about 15 percent go undetected, so that false positives stay under 1 percent. It is a strategic trade-off: fewer AI texts are caught so that fewer innocent students are wrongly accused.
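One way to see what this trade-off means in practice is to ask what share of flagged papers really contain AI writing. The sketch below applies Bayes' rule to the 85 percent detection rate and 1 percent false positive rate quoted above. The share of submissions that contain AI writing (`ai_share`) is not given anywhere in this article, so the values used are purely hypothetical.

```python
def share_of_flags_that_are_ai(ai_share: float,
                               detection_rate: float = 0.85,
                               false_positive_rate: float = 0.01) -> float:
    """Fraction of flagged papers that really contain AI writing (Bayes' rule)."""
    true_flags = ai_share * detection_rate
    false_flags = (1 - ai_share) * false_positive_rate
    if true_flags + false_flags == 0:
        return 0.0
    return true_flags / (true_flags + false_flags)


# ai_share values are hypothetical, chosen only for illustration.
for ai_share in (0.05, 0.10, 0.30):
    correct = share_of_flags_that_are_ai(ai_share)
    print(f"{ai_share:.0%} of papers use AI -> {correct:.1%} of flags are correct")
```

The fewer papers that actually use AI, the larger the share of flags that land on innocent students, even when the false positive rate itself stays the same.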

Independent studies paint a different picture:

One more detailed analysis found that 93% of fully human-written texts and 77% of fully AI-generated texts were correctly identified, with an overall success rate of 86% in identifying any AI presence.

However, when AI-generated text was manually disguised or heavily edited, Turnitin correctly identified it only 63% of the time.

Turnitin acknowledges a margin of error of plus or minus 15 percentage points, so a score of 50% AI could reflect a true value anywhere from 35% to 65%.
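As a small worked example of that margin, the sketch below turns a reported score into its plausible range. The clamping to 0 and 100 is an assumption added here so the range stays a valid percentage, and the function name is hypothetical.

```python
def score_range(reported_score: float, margin: float = 15) -> tuple[float, float]:
    """Plausible range for a reported AI score, kept within 0-100."""
    low = max(0, reported_score - margin)
    high = min(100, reported_score + margin)
    return low, high


for score in (10, 50, 95):
    low, high = score_range(score)
    print(f"reported {score}% -> plausible range {low}% to {high}%")
```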

Common Myths about Turnitin AI Detection

Myth 1: Turnitin Can Detect All AI-Generated Content

This is false. Turnitin's AI checker misses about 15 percent of the AI-written text in a document. Detection rates drop even further when a student edits or paraphrases the AI output.

Myth 2: A High AI Score Means the Student Cheated

A high score does not prove cheating; it is a signal that calls for investigation. The stakes in academic integrity cases are high, and a false positive can be very damaging to a student. This is why some scholars argue that detection should be used conservatively: AI detection results should be treated as a hint for humans to examine, not as a final decision.

Myth 3: Turnitin Is 100% Accurate

No AI detector is completely accurate. Detectors generally range from 60 to 90 percent accuracy, and their most common problems are false positives, bias against non-native writing, and weakness against paraphrased or hybrid text.

Myth 4: Turnitin Checks Against a Database of AI Text

As explained earlier, Turnitin has no database of AI-generated text to compare against. Instead, it relies on a transformer deep-learning model trained to recognize the statistical fingerprint that large language models leave in text.

Myth 5: Simple Paraphrasing Can Completely Evade Turnitin

In July 2024, Turnitin introduced its AIR-1-powered paraphrase highlighting model, which is designed to identify the statistical fingerprint of AI rewriting and AI paraphrasing tools. Simple word replacement no longer fools it.

The Real Facts about Turnitin AI Detection

Here is what the evidence actually supports:

Fact 1: False positive rates are higher than stated. Independent research indicates a false positive rate of 2-5 percent in practice. At the sentence level the rate is approximately 4 percent, and a review by the Washington Post found a false positive rate of up to 50 percent in small-scale tests.
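A few percentage points can sound small, so here is a rough sketch of what the rates above mean in headcount terms. It assumes every paper is honestly written and that false flags occur independently of one another, which is a simplification. The paper counts and class size are hypothetical.

```python
def expected_false_flags(honest_papers: int, false_positive_rate: float) -> float:
    """Average number of honest papers that would be wrongly flagged."""
    return honest_papers * false_positive_rate


def chance_of_any_false_flag(class_size: int, false_positive_rate: float) -> float:
    """Probability that at least one honest paper in a class is flagged.

    Assumes flags are independent across papers (a simplifying assumption).
    """
    return 1 - (1 - false_positive_rate) ** class_size


# 1% is the stated rate; 2% and 5% are the independent estimates quoted above.
for rate in (0.01, 0.02, 0.05):
    flagged = expected_false_flags(1000, rate)
    any_flag = chance_of_any_false_flag(30, rate)
    print(f"rate {rate:.0%}: about {flagged:.0f} of 1,000 honest papers flagged; "
          f"chance of at least one in a class of 30: {any_flag:.0%}")
```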

Fact 2: Results for documents under 300 words are unreliable. Turnitin raised the minimum length for AI detection from 150 words to 300 words, noting that accuracy improves with longer text.

Fact 3: Detection varies by AI model. Testing shows large differences across AI platforms: text from GPT-4 and Google Gemini was detected with 98-100% accuracy, while Claude was less consistent, with detection rates of 53-68% depending on the version.

Fact 4: It is a flag, not a verdict. Turnitin itself says its tool gives educators data to support well-informed decisions and does not determine academic misconduct on its own.

Fact 5: Turnitin keeps updating its models. In December 2023, Turnitin released an improved model, AIW-2, to replace the original AIW-1. The detector continues to evolve alongside AI writing tools.

The False Positive Problem: Who Is Most at Risk?

One of the most worrisome aspects of Turnitin is false positives, i.e., human writing being detected as AI.

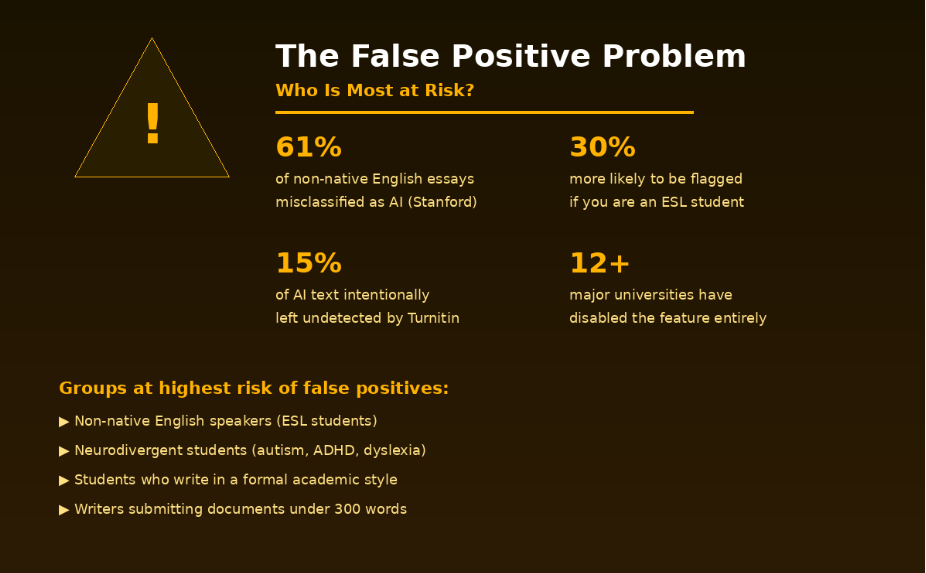

Stanford University researchers tested seven AI detectors on essays by non-native English writers. The findings were alarming: the detectors misclassified 61% of essays written by non-native English speakers as AI-written, while almost no essays by native English speakers were falsely flagged.

According to 2024 studies, ESL submissions are disproportionately falsely flagged by 30 or more. The Markup reported that international students worry that false accusations could cost them merit scholarships, damage their academic records, and even jeopardize their visa status.

Neurodivergent students face similar risks. A 2024 peer-reviewed chapter found that neurodivergent writers — including those with autism, ADHD, and dyslexia — are among the groups most likely to be impacted by false positives, due to their reliance on repeated phrases, consistent terminology, and pattern-based composition.

Well-trained students who write in a formal, structured academic style can also show low perplexity and low burstiness, which Turnitin associates with AI. In other words, writing well can sometimes work against you.

How Educators Should Use Turnitin AI Detection

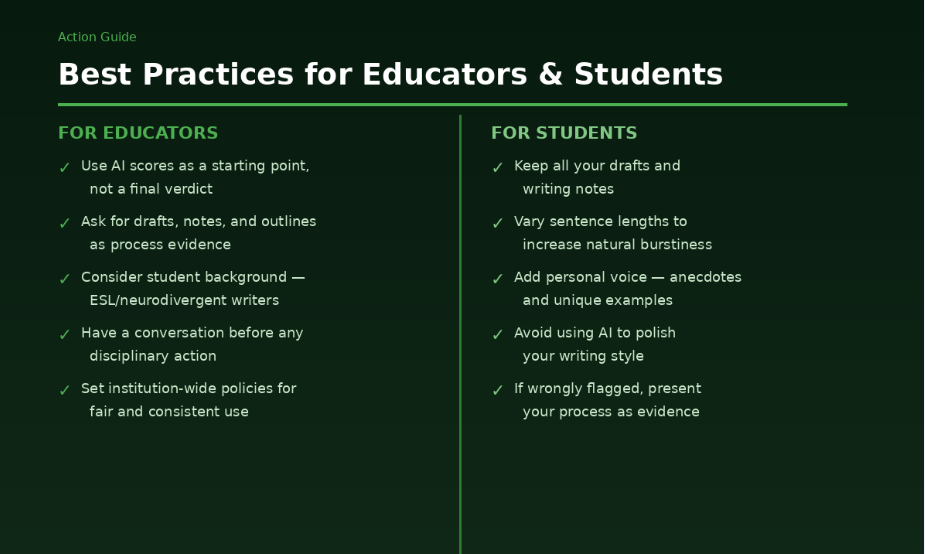

Institutions that take an education-centered approach to AI tend to see fewer adversarial interactions and better student outcomes. AI detection should be one tool among several, used alongside process-based assessment, oral check-ins, and domain-specific tasks.

Educators should take the following steps:

- Request drafts and notes. Process evidence is much more reliable than a single AI score.

- Talk first. Discuss the work with the student before considering any disciplinary measures.

- Set a reasonable threshold. Most institutions treat scores below 20 percent as low risk.

- Consider the student's background. Scores for neurodivergent and ESL learners call for extra caution, since these groups are more prone to false positives.

At least 12 large universities, including Yale, Johns Hopkins, Vanderbilt, and Waterloo, have disabled or blocked the AI detection feature, citing bias and inaccurate accusations.

How Students Can Protect Themselves

If you write your own work, you need not fear Turnitin. Still, it pays to be prepared.

- Keep everything you write, including notes and drafts, as evidence of your writing process.

- Vary your sentences. Mixing short sentences with longer ones naturally increases burstiness.

- Add your own voice. Personal examples, anecdotes, and analysis are hard for AI detectors to mistake for machine writing.

- Do not use AI to edit your writing. Even a small request to ChatGPT, such as asking it to make your text clearer, can shift the statistical patterns of your text enough to raise a flag.

- If you are falsely flagged, file an appeal and provide your drafts, outlines, and research notes as evidence.

The Future of AI Detection in Education

AI generators and AI detectors are locked in a perpetual back-and-forth in which each side keeps improving over time, much like cybercriminals and security researchers.

As 2025 AI models generate more varied, higher-entropy text, their signature patterns become less pronounced and AI writing will be harder to detect. To remain relevant, Turnitin will have to keep updating its models.

The bottom line is that no tool can replace human judgment. Detection scores are not conclusive; they should start a conversation.

Conclusion

Turnitin is a valuable tool, but it is not without faults. Understanding Turnitin's AI detection accuracy means knowing both its strengths and its actual limitations. It works well on long, unedited AI text but struggles with short texts, edited texts, and non-native writing. False positives are real and have been documented to harm innocent students. Teachers should treat detection scores as a starting point for investigation, never as a final decision. And students should focus on writing naturally, keep their drafts, and remember that a flag is not a guilty verdict.

FAQs

Here are five frequently asked questions about Turnitin AI detection accuracy.

Q1. What is Turnitin’s AI detection accuracy rate?

Turnitin itself states an accuracy of 98 percent, whereas independent research indicates real-world rates of 77-86 percent for fully AI-generated text, and much lower rates for edited or mixed work.

Q2. Is Turnitin able to detect ChatGPT-written essays?

Yes. GPT-4 text is detected with 98-100 percent accuracy, provided it has not been extensively edited or paraphrased.

Q3. Is Turnitin more likely to flag non-native English speakers?

Yes. According to a Stanford University study, AI detectors falsely labeled 61% of essays by non-native English writers as AI-generated.

Q4. Is it possible to contest a Turnitin AI detection?

Yes. The majority of institutions permit students to challenge a flag by providing process evidence, including drafts, outlines and notes. It is always best to save your work at each step.

Q5. What percentage on Turnitin implies the use of AI?

Most institutions treat scores below 20% as low risk. Turnitin itself shows only an asterisk for scores in that range, because they are less reliable. Scores above 20% can lead to an instructor review.